Saturday, July 30, 2011

Do you understand?

NAPIER'S BONES

SLIDE RULE

PASCALINE

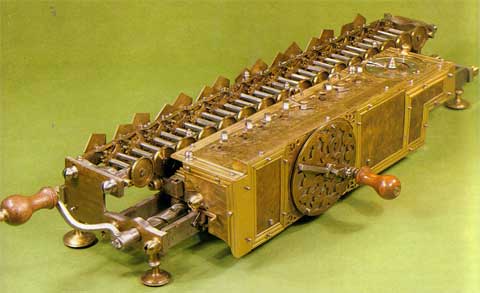

STEP RECKONER

DIFFERENCE ENGINE

ANALYTICAL ENGINE

HOLLERITH'S TABULATING MACHINE

MARK 1

ENIAC

UNIVAC

Charles Babbage produced a design for a mechanical computation engine in the nineteenth century. The engineering precision he demanded was hard to achieve at the time, so the machine was never completed. Blaise Pascal, a French mathematician, had already built a mechanical calculator, the Pascaline, some two centuries earlier. Modern computing, by contrast, relies on electronic circuits of one kind or another.

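An electronic machine called Colossus was used in the Second World War to help break the Lorenz cipher used by the German High Command (it is often confused with the work on the Enigma codes, which were attacked with other machines). It was so well matched to this one task that it is often said to have rivalled a much later PC with a Pentium processor programmed to do the same job.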

One of the earliest large-scale computers, the electromechanical Harvard Mark I, was run for the US Navy during the Second World War, with Grace Hopper among its first programmers. She is popularly credited with the phrase “bug” for a program error, after a moth was found jammed in a relay, although engineers were already using the term. She developed one of the first compilers, A-0, and she later worked on validating COBOL, a language that was widely used in industry. She was at the forefront of computer development. As an officer of the Naval Reserve, she achieved the rank of Rear Admiral.

All these early machines were huge, needed large amounts of electricity to keep them going, and required specialised people to run them and interpret the output. Programming them was difficult because it had to be done in machine code, the series of 1s and 0s that the computer works with directly. Finding errors was time-consuming and difficult.

An American company, International Business Machines (IBM), was one of the first to go into commercial production of computers. Its chairman is famously, though perhaps apocryphally, quoted as reckoning that there was a world market for only four or five of these machines. The main problem was that these machines used thermionic valves (“toobs”) in their electronics. The valves used a vast amount of electricity and were none too reliable.

When the transistor, invented in 1947, was perfected during the nineteen-fifties, the complex circuitry within a computer could be made into a manageable size. Contrary to expectations, the computer rapidly became a “must have” for large corporations. These were vast machines called mainframes, which needed large buildings and a good number of specialised staff to operate them. COBOL (Common Business Oriented Language) was a common language used by these machines, along with the EBCDIC character codes. The picture shows a mainframe:

Mainframes are still in use in some corporations because of the huge sums of money invested. However many mainframes have less computing power than PCs and only survive due to the economics.
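The EBCDIC character codes mentioned above differ from the ASCII codes used on most PCs, which is one reason data had to be converted when it moved between the two worlds. The following is only a rough sketch of that difference. It assumes that Python's built-in `cp037` codec (one common EBCDIC code page) is a fair stand-in for whatever variant a particular mainframe used, and the helper name `byte_codes` is made up for this example.

```python
# Compare how the same text is stored under ASCII and under EBCDIC.
# Assumption: the "cp037" codec (EBCDIC, US/Canada) stands in for the
# EBCDIC variant a given mainframe would actually have used.

def byte_codes(text, encoding):
    """Return the encoded bytes of `text` as two-digit hex strings."""
    return [f"{b:02X}" for b in text.encode(encoding)]


if __name__ == "__main__":
    word = "COBOL"
    print("ASCII :", byte_codes(word, "ascii"))
    print("EBCDIC:", byte_codes(word, "cp037"))
```

The same five letters come out as entirely different byte values in the two encodings, so a file written on one kind of machine is unreadable on the other until it has been translated.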

Mini-computers are smaller versions of mainframes. Some of these were based on the analogue concept, in which the operational amplifier was at the heart. Analogue computing is almost completely unknown nowadays.

The microcomputer is the generic name for a number of different kinds of small computer, of which the most common is now the PC. When microcomputers came in, in the late seventies, they took advantage of the rapidly falling price of integrated circuit chips. Some of these were aimed at the home market. Examples include the Sinclair Spectrum and the Amiga.

The British Broadcasting Corporation sponsored the design of a microcomputer which became very common in schools. The picture below shows a BBC microcomputer.

These machines had a very simple operating system, and were easy to run. Many people used them to write their own software; there was little commercially available software. A number of teenagers made a lot of money by producing software in their bedrooms.

The graphics were crude, and there was little memory. Auxiliary storage was on 5¼-inch floppy disks that were even cruder than later floppy disks. Some programs were even recorded on audio cassette. Sometimes programs were broadcast on the radio; the transmission sounded like a nest of angry bees. Some software was available on pre-programmed chips, and access to these was remarkably easy: for Interword, a word processor, you typed “*IW.”. The BBC was very good at:

- Data-logging

- Word-processing

- Spreadsheets

The old BBCs were very reliable. If a program did go wrong, the computer could be restored by pressing the BREAK key, and the program could be reloaded by pressing SHIFT + BREAK. BBC computers are still in use in some schools. The BBC was overtaken by the Archimedes which, in its later versions, could be configured to run like a PC.

Tuesday, July 26, 2011

Raju takes an unexpected approach to an interview for a corporate job, whilst Farhan decides to pursue his love of photography. The two friends succeed in their tasks, and this further infuriates Virus, causing him to come up with a plan that will jeopardize Raju's job. Pia overhears this and decides to help Rancho and Farhan by providing them with the keys to her father's office. However, Virus catches them and expels them on the spot. Pia then angrily confronts him, revealing that his son, her brother, committed suicide when he could not get into ICE as Virus wanted. At the same time, Virus's pregnant elder daughter Mona (Mona Singh) goes into labour. A heavy storm cuts all power and traffic, and Pia is in self-imposed exile after revealing the truth about her brother's death. She instructs Rancho over VoIP to deliver the baby in the college common room. After the baby is apparently stillborn, Rancho resuscitates it. Virus reconciles with Rancho and his friends, and allows the trio to stay for their final exams.

Monday, July 25, 2011

Assignment #6

A computer is a programmable machine designed to sequentially and automatically carry out a sequence of arithmetic or logical operations. The particular sequence of operations can be changed readily, allowing the computer to solve more than one kind of problem.

Conventionally a computer consists of some form of memory for data storage, at least one element that carries out arithmetic and logic operations, and a sequencing and control element that can change the order of operations based on the information that is stored. Peripheral devices allow information to be entered from an external source, and allow the results of operations to be sent out.

A computer's processing unit executes a series of instructions that make it read, manipulate and then store data. Conditional instructions change the sequence of instructions as a function of the current state of the machine or its environment.
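The description above (memory, an arithmetic element, and a control element that can change the order of operations) can be made concrete with a toy machine. This is only an illustrative sketch: the instruction names (`LOAD`, `ADD`, `SUB`, `STORE`, `JUMP_IF_ZERO`, `JUMP`, `HALT`) and the function `run` are invented here and do not correspond to any real processor.

```python
# A toy stored-program machine. Memory is a dict of named cells, the
# accumulator plays the role of the arithmetic element, and the program
# counter is the sequencing and control element.
# All instruction names are hypothetical, chosen for readability.

def run(program, memory):
    """Execute `program` (a list of tuples) against `memory` (a dict)."""
    acc = 0   # accumulator: the value currently being worked on
    pc = 0    # program counter: address of the next instruction
    while True:
        op, *args = program[pc]
        pc += 1
        if op == "LOAD":
            acc = memory[args[0]]
        elif op == "ADD":
            acc += memory[args[0]]
        elif op == "SUB":
            acc -= memory[args[0]]
        elif op == "STORE":
            memory[args[0]] = acc
        elif op == "JUMP_IF_ZERO":
            # Conditional instruction: the next step depends on stored data.
            if acc == 0:
                pc = args[0]
        elif op == "JUMP":
            pc = args[0]
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op}")


# Multiply a by b using repeated addition; b is used as a countdown.
MULTIPLY = [
    ("LOAD", "b"),          # address 0: fetch the counter
    ("JUMP_IF_ZERO", 9),    # address 1: counter exhausted, go to HALT
    ("LOAD", "result"),     # address 2
    ("ADD", "a"),           # address 3
    ("STORE", "result"),    # address 4: result = result + a
    ("LOAD", "b"),          # address 5
    ("SUB", "one"),         # address 6
    ("STORE", "b"),         # address 7: b = b - 1
    ("JUMP", 0),            # address 8: repeat
    ("HALT",),              # address 9
]

if __name__ == "__main__":
    final = run(MULTIPLY, {"a": 6, "b": 3, "one": 1, "result": 0})
    print(final["result"])
```

Replacing `MULTIPLY` with a different list of instructions makes the same machine solve a different problem, which is the sense in which the sequence of operations "can be changed readily".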

The first electronic computers were developed in the mid-20th century (1940–1945). Originally, they were the size of a large room, consuming as much power as several hundred modern personal computers (PCs).[1]

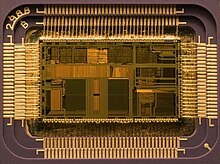

Modern computers based on integrated circuits are millions to billions of times more capable than the early machines, and occupy a fraction of the space.[2] Simple computers are small enough to fit into mobile devices, and mobile computers can be powered by small batteries. Personal computers in their various forms are icons of the Information Age and are what most people think of as "computers". However, the embedded computers found in many devices from mp3 players to fighter aircraft and from toys to industrial robots are the most numerous.

The history of the modern computer begins with two separate technologies, automated calculation and programmability, but no single device can be identified as the earliest computer, partly because of the inconsistent application of that term. A few devices are worth mentioning, though. Some mechanical aids to computing were very successful and survived for centuries until the advent of the electronic calculator: the Sumerian abacus, designed around 2500 BC,[4] a descendant of which won a speed competition against a modern desk calculating machine in Japan in 1946;[5] the slide rule, invented in the 1620s, which was carried on five Apollo space missions, including to the moon;[6] and arguably the astrolabe and the Antikythera mechanism, an ancient astronomical computer built by the Greeks around 80 BC.[7] The Greek mathematician Hero of Alexandria (c. 10–70 AD) built a mechanical theater which performed a play lasting 10 minutes and was operated by a complex system of ropes and drums that might be considered a means of deciding which parts of the mechanism performed which actions and when.[8] This is the essence of programmability.

Around the end of the tenth century, the French monk Gerbert d'Aurillac brought back from Spain the drawings of a machine invented by the Moors that answered Yes or No to the questions it was asked (binary arithmetic).[9] Again in the thirteenth century, the monks Albertus Magnus and Roger Bacon built talking androids without any further development (Albertus Magnus complained that he had wasted forty years of his life when Thomas Aquinas, terrified by his machine, destroyed it).[10]

In 1642, the Renaissance saw the invention of the mechanical calculator,[11] a device that could perform all four arithmetic operations without relying on human intelligence.[12] The mechanical calculator was at the root of the development of computers in two separate ways. First, it was in trying to develop more powerful and more flexible calculators[13] that the computer was first theorized by Charles Babbage[14][15] and then developed,[16] leading to the mainframe computers of the 1960s. Second, the microprocessor, which started the personal computer revolution and is now at the heart of all computer systems regardless of size or purpose,[17] was invented serendipitously by Intel[18] during the development of an electronic calculator, a direct descendant of the mechanical calculator.[19]

This portrait of Jacquard was woven in silk on a Jacquard loom and required 24,000 punched cards to create (1839). It was only produced to order. Charles Babbage owned one of these portraits; it inspired him to use perforated cards in his analytical engine.[21]

It was the fusion of automatic calculation with programmability that produced the first recognizable computers. In 1837, Charles Babbage was the first to conceptualize and design a fully programmable mechanical computer, his analytical engine.[22] Limited finances and Babbage's inability to resist tinkering with the design meant that the device was never completed; nevertheless his son, Henry Babbage, completed a simplified version of the analytical engine's computing unit (the mill) in 1888. He gave a successful demonstration of its use in computing tables in 1906. This machine was given to the Science Museum in South Kensington in 1910.

In the late 1880s, Herman Hollerith invented the recording of data on a machine readable medium. Prior uses of machine readable media, above, had been for control, not data. "After some initial trials with paper tape, he settled on punched cards ..."[23] To process these punched cards he invented the tabulator, and the keypunch machines. These three inventions were the foundation of the modern information processing industry. Large-scale automated data processing of punched cards was performed for the 1890 United States Census by Hollerith's company, which later became the core of IBM. By the end of the 19th century a number of ideas and technologies, that would later prove useful in the realization of practical computers, had begun to appear: Boolean algebra, the vacuum tube (thermionic valve), punched cards and tape, and the teleprinter.

During the first half of the 20th century, many scientific computing needs were met by increasingly sophisticated analog computers, which used a direct mechanical or electrical model of the problem as a basis for computation. However, these were not programmable and generally lacked the versatility and accuracy of modern digital computers.

Alan Turing is widely regarded as the father of modern computer science. In 1936 Turing provided an influential formalisation of the concept of the algorithm and computation with the Turing machine, providing a blueprint for the electronic digital computer.[24] Of his role in the creation of the modern computer, Time magazine, in naming Turing one of the 100 most influential people of the 20th century, states: "The fact remains that everyone who taps at a keyboard, opening a spreadsheet or a word-processing program, is working on an incarnation of a Turing machine".[24]
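To give a feel for what a Turing machine is, here is a minimal simulator together with one small rule table that adds 1 to a binary number. It is a sketch under stated assumptions rather than Turing's own notation: the function name `run_turing_machine`, the state names `start`, `carry` and `halt`, and the use of `_` as the blank symbol are all choices made for this example.

```python
# A tiny Turing machine simulator. The machine has a tape of symbols, a
# read/write head, a current state, and a rule table mapping
# (state, symbol) -> (symbol to write, head move "L" or "R", next state).
# State names and the "_" blank symbol are assumptions for this sketch.

def run_turing_machine(rules, tape, state="start", blank="_", max_steps=10_000):
    """Run until the state is "halt"; return the tape with blanks trimmed."""
    cells = dict(enumerate(tape))   # sparse tape: position -> symbol
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    if not cells:
        return ""
    positions = range(min(cells), max(cells) + 1)
    return "".join(cells.get(i, blank) for i in positions).strip(blank)


# Rule table: walk right to the end of the number, then add 1 while
# moving left, carrying past every 1 that turns into a 0.
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "L", "halt"),
    ("carry", "_"): ("1", "L", "halt"),
}

if __name__ == "__main__":
    print(run_turing_machine(INCREMENT, "1011"))
```

The simulator itself never changes; only the rule table does. Supplying a different table gives a different computation on the same simple machinery.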

The inventor of the program-controlled computer was Konrad Zuse, who built the first working computer in 1941 and later in 1955 the first computer based on magnetic storage.[26]

George Stibitz is internationally recognized as a father of the modern digital computer. While working at Bell Labs in November 1937, Stibitz invented and built a relay-based calculator he dubbed the "Model K" (for "kitchen table", on which he had assembled it), which was the first to use binary circuits to perform an arithmetic operation. Later models added greater sophistication including complex arithmetic and programmability.[27]
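The idea of performing arithmetic with binary circuits can be sketched in a few lines. The code below is not a model of Stibitz's relay wiring; it simply uses Python's bitwise operators in place of relays to show how a one-bit adder is built from logic operations and then chained to add longer numbers. The function names `half_adder`, `full_adder` and `add_binary` are chosen for this example.

```python
# Binary addition built from logic operations, in the spirit of an early
# relay adder. Bitwise operators stand in for the relay circuits here.

def half_adder(a, b):
    """Add two bits; return (sum bit, carry bit)."""
    return a ^ b, a & b


def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry; return (sum bit, carry out)."""
    partial_sum, carry1 = half_adder(a, b)
    total, carry2 = half_adder(partial_sum, carry_in)
    return total, carry1 | carry2


def add_binary(x, y):
    """Add two binary strings by chaining full adders from the right."""
    width = max(len(x), len(y))
    x, y = x.zfill(width), y.zfill(width)
    carry = 0
    bits = []
    for a, b in zip(reversed(x), reversed(y)):
        s, carry = full_adder(int(a), int(b), carry)
        bits.append(str(s))
    if carry:
        bits.append("1")
    return "".join(reversed(bits))


if __name__ == "__main__":
    print(add_binary("1011", "0110"))   # 11 + 6 in binary
```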

A succession of steadily more powerful and flexible computing devices was constructed in the 1930s and 1940s, gradually adding the key features that are seen in modern computers. The use of digital electronics (largely invented by Claude Shannon in 1937) and more flexible programmability were vitally important steps, but defining one point along this road as "the first digital electronic computer" is difficult (Shannon 1940).


History of computing


The first use of the word "computer" was recorded in 1613, referring to a person who carried out calculations, or computations, and the word continued with the same meaning until the middle of the 20th century. From the end of the 19th century onwards, the word began to take on its more familiar meaning, describing a machine that carries out computations.[3]

Limited-function early computers

The Jacquard loom, on display at the Museum of Science and Industry in Manchester, England, was one of the first programmable devices.


First general-purpose computers

In 1801, Joseph Marie Jacquard made an improvement to the textile loom by introducing a series of punched paper cards as a template which allowed his loom to weave intricate patterns automatically. The resulting Jacquard loom was an important step in the development of computers because the use of punched cards to define woven patterns can be viewed as an early, albeit limited, form of programmability.

The Most Famous Image in the Early History of Computing[20]

This portrait of Jacquard was woven in silk on a Jacquard loom and required 24,000 punched cards to create (1839). It was only produced to order. Charles Babbage owned one of these portraits; it inspired him to use perforated cards in his analytical engine.[21]


The Zuse Z3, 1941, considered the world's first working programmable, fully automatic computing machine.

The ENIAC, which became operational in 1946, is considered to be the first general-purpose electronic computer.

The Atanasoff–Berry Computer (ABC) was among the first electronic digital binary computing devices. Conceived in 1937 by Iowa State College physics professor John Atanasoff, and built with the assistance of graduate student Clifford Berry,[25] the machine was not programmable, being designed only to solve systems of linear equations. The computer did employ parallel computation. A 1973 court ruling in a patent dispute found that the patent for the 1946 ENIAC computer derived from the Atanasoff–Berry Computer.


Notable achievements of the 1930s and 1940s include:

- Konrad Zuse's electromechanical "Z machines". The Z3 (1941) was the first working machine featuring binary arithmetic, including floating point arithmetic and a measure of programmability. In 1998 the Z3 was proved to be Turing complete, therefore being the world's first operational computer.[28]

- The non-programmable Atanasoff–Berry Computer (commenced in 1937, completed in 1941) which used vacuum tube based computation, binary numbers, and regenerative capacitor memory. The use of regenerative memory allowed it to be much more compact than its peers (being approximately the size of a large desk or workbench), since intermediate results could be stored and then fed back into the same set of computation elements.

- The secret British Colossus computers (1943),[29] which had limited programmability but demonstrated that a device using thousands of tubes could be reasonably reliable and electronically reprogrammable. It was used for breaking German wartime codes.

- The Harvard Mark I (1944), a large-scale electromechanical computer with limited programmability.[30]

- The U.S. Army's Ballistic Research Laboratory ENIAC (1946), which used decimal arithmetic and is sometimes called the first general purpose electronic computer (since Konrad Zuse's Z3 of 1941 used electromagnets instead of electronics). Initially, however, ENIAC had an inflexible architecture which essentially required rewiring to change its programming.

| Category | Type | Examples |
| --- | --- | --- |
| Peripheral device (input/output) | Input | Mouse, Keyboard, Joystick, Image scanner, Webcam, Graphics tablet, Microphone |
| | Output | Monitor, Printer, Loudspeaker |
| | Both | Floppy disk drive, Hard disk drive, Optical disc drive, Teleprinter |
| Computer buses | Short range | RS-232, SCSI, PCI, USB |
| | Long range (computer networking) | Ethernet, ATM, FDDI |

Software


Software refers to parts of the computer which do not have a material form, such as programs, data, protocols, etc. When software is stored in hardware that cannot easily be modified (such as BIOS ROM in an IBM PC compatible), it is sometimes called "firmware" to indicate that it falls into an uncertain area somewhere between hardware and software.

Programming languages


Programming languages provide various ways of specifying programs for computers to run. Unlike natural languages, programming languages are designed to permit no ambiguity and to be concise. They are purely written languages and are often difficult to read aloud. They are generally either translated into machine code by a compiler or an assembler before being run, or translated directly at run time by an interpreter. Sometimes programs are executed by a hybrid method of the two techniques. There are thousands of different programming languages, some intended to be general purpose, others useful only for highly specialized applications.

| Topic | Examples |
| --- | --- |
| Lists of programming languages | Timeline of programming languages, List of programming languages by category, Generational list of programming languages, List of programming languages, Non-English-based programming languages |
| Commonly used assembly languages | ARM, MIPS, x86 |
| Commonly used high-level programming languages | Ada, BASIC, C, C++, C#, COBOL, Fortran, Java, Lisp, Pascal, Object Pascal |
| Commonly used scripting languages | Bourne script, JavaScript, Python, Ruby, PHP, Perl |
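As noted earlier in this section, a program may be translated before it runs, interpreted as it runs, or handled by a mixture of the two. Python, one of the scripting languages listed, is a convenient place to see the hybrid approach: the standard Python implementation first translates source text into an intermediate bytecode and then interprets that bytecode. The sketch below uses the built-in `compile` and `exec` functions and the standard `dis` module. The sample source line and the helper name `compile_then_run` are made up for illustration.

```python
# Show the two stages of a hybrid translator using Python itself:
# stage 1 translates source text to bytecode, stage 2 interprets it.
import dis

# Hypothetical one-line program used only for this demonstration.
SOURCE = "total = price * quantity"


def compile_then_run(source, variables):
    """Translate `source` to bytecode once, then execute that bytecode."""
    code = compile(source, "<example>", "exec")   # translation step
    namespace = dict(variables)                   # copy so the caller's dict is untouched
    exec(code, namespace)                         # interpretation step
    return namespace


if __name__ == "__main__":
    # Print the intermediate instructions the interpreter will follow.
    dis.dis(compile(SOURCE, "<example>", "exec"))
    print(compile_then_run(SOURCE, {"price": 4, "quantity": 3})["total"])
```

The exact bytecode listing printed by `dis` varies between Python versions, so it is best read as an illustration of the intermediate form rather than as a fixed output.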

Professions and organizations

As the use of computers has spread throughout society, there are an increasing number of careers involving computers.

| Type of organization | Examples |
| --- | --- |
| Standards groups | ANSI, IEC, IEEE, IETF, ISO, W3C |
| Professional societies | ACM, AIS, IET, IFIP, BCS |
| Free/open source software groups | Free Software Foundation, Mozilla Foundation, Apache Software Foundation |
